Month: November 2016

The Future of Lip Reading

Published Date: November 24, 2016

Will your next hearing aids have cameras? New research on lip reading by artificial intelligence suggests that, and more, is on the way.

Apple’s Agenda

Published Date: November 11, 2016

Your hearing aids and your phone. Apple and Android are making the connection.

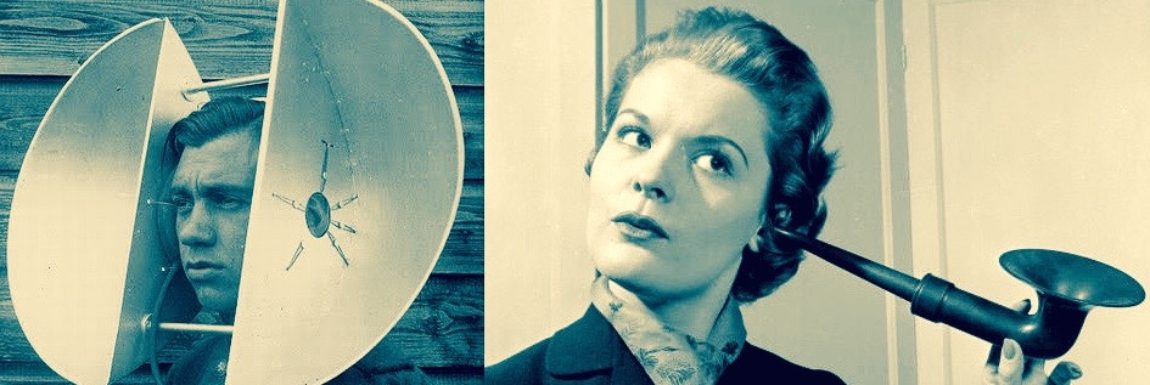

Steampunk Hearing Aid Art

Published Date: November 4, 2016

“Steampunk is a joyous fantasy of the past, allowing us to revel in a nostalgia for what never was.” – George Mann

A Deaf Man in Paris

Published Date: November 1, 2016

A rendezvous with one of the world’s leading geneticists who first identified the hereditary causes of hearing loss.